The Neuroscience of Language: How Your Brain Understands Words

Discovering the Remarkable System That Makes Human Communication Possible

As you read these words, your brain performs an extraordinary feat that seems effortless but involves intricate coordination across multiple brain regions. Language understanding is one of the most complex cognitive processes humans engage in, yet we do it so naturally that we rarely consider the remarkable neural machinery at work. From the moment sound waves reach your ears to the instant you grasp meaning, your brain orchestrates a sophisticated sequence of operations that researchers are only now beginning to understand in detail.

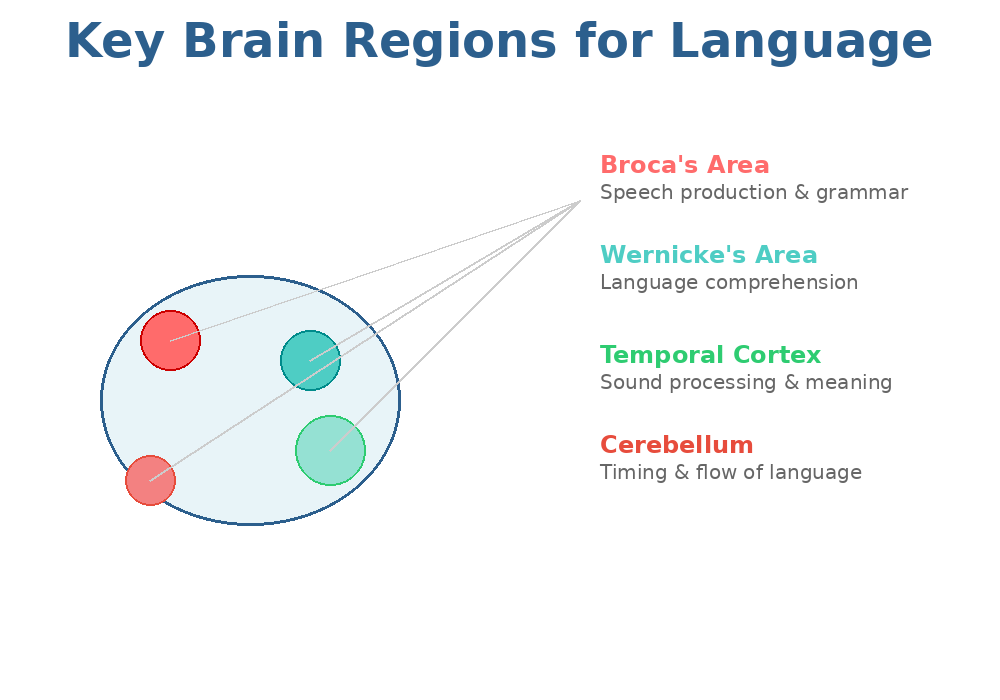

The Brain's Language Network: More Than Just Two Areas

For over a century, neuroscience textbooks have highlighted two key brain regions for language: Broca's area in the frontal lobe, traditionally linked to speech production, and Wernicke's area in the temporal lobe, traditionally linked to comprehension. While these regions remain important, research published as recently as 2026 shows that language processing is far more distributed across the brain than previously thought.

Recent neuroimaging studies demonstrate that language is truly a whole-brain activity. When you listen to someone speak, process written text, or formulate your own sentences, your brain activates motor systems, auditory processing centers, visual networks, and memory regions simultaneously. This complexity challenges simplified models and suggests that effective language use requires coordinated activity across dozens of interconnected brain areas.

The Step-by-Step Journey: From Sound to Meaning

Groundbreaking research published in early 2026 by scientists at Hebrew University, Google Research, and Princeton University has revealed that human brains process language in a remarkably systematic, layered fashion—strikingly similar to how advanced AI language models like GPT work.

By recording brain activity from people listening to a 30-minute podcast, researchers found that meaning unfolds gradually through distinct stages. Early neural responses correspond to basic sound processing, while later activity reflects deeper semantic understanding and contextual integration. This staged processing mirrors the hierarchical layers of transformer-based AI systems, suggesting that biological and artificial systems may converge on similar solutions for language understanding.

Stage 1: Acoustic Processing

Language comprehension begins in the auditory cortex, where incoming sound waves are analyzed for their basic acoustic properties: pitch, loudness, duration, and frequency patterns. Within a fraction of a second, your brain distinguishes speech sounds from environmental noise and begins extracting the phonetic features that differentiate one sound from another.

Stage 2: Phonological Recognition

Next, your brain maps these acoustic patterns onto phonemes—the smallest units of sound in your language. This process happens in the superior temporal gyrus, which acts as an interface between raw sound and linguistic representation. Native speakers excel at this because their brains have been trained through years of exposure to recognize the specific phonemic contrasts relevant to their language.

Stage 3: Lexical Access

Once phonemes are identified, your brain rapidly searches its mental lexicon—an internal dictionary containing every word you know. This lexical access happens astonishingly fast, typically within 200-400 milliseconds of hearing a word. The process involves matching incoming sound patterns against stored representations while simultaneously activating semantic information associated with those words.
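The narrowing-down described above can be illustrated with a toy sketch in the spirit of incremental, "cohort"-style models of spoken word recognition. As each phoneme arrives, only the stored words that match everything heard so far remain candidates. The mini-lexicon, its simplified letter-based "phonemes," and the function `cohort_trace` are hypothetical. The sketch also ignores timing, word frequency, and semantic activation entirely.

```python
from typing import Dict, List

# Hypothetical mini-lexicon: word -> simplified phoneme sequence.
# Real transcriptions would use IPA symbols; plain letters are a simplification.
LEXICON: Dict[str, List[str]] = {
    "candle": ["k", "a", "n", "d", "l"],
    "candy": ["k", "a", "n", "d", "i"],
    "cat": ["k", "a", "t"],
    "camel": ["k", "a", "m", "l"],
    "dog": ["d", "o", "g"],
}


def cohort_trace(heard: List[str], lexicon: Dict[str, List[str]]) -> List[List[str]]:
    """Return the sorted candidate set remaining after each incoming phoneme.

    A word stays a candidate while its phoneme sequence begins with
    everything heard so far (a simple prefix match).
    """
    trace = []
    for i in range(1, len(heard) + 1):
        prefix = heard[:i]
        candidates = sorted(
            word for word, phones in lexicon.items() if phones[:i] == prefix
        )
        trace.append(candidates)
    return trace


if __name__ == "__main__":
    for step, cands in enumerate(cohort_trace(["k", "a", "n", "d", "i"], LEXICON), 1):
        print(step, cands)
```

Running it prints the shrinking candidate sets. Four words remain after each of the first two phonemes and two after the third. After the fifth, only "candy" is left, because that is the point at which the input becomes unique in this lexicon.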

Stage 4: Syntactic Processing

Your brain doesn't just recognize individual words; it also analyzes how they fit together grammatically. Networks in the left frontal and temporal cortex process syntactic structure, determining the relationships between words in a sentence. On some accounts, this structural analysis precedes full semantic interpretation, as if your brain builds a grammatical scaffold before hanging meaning on it. Other models hold that syntax and meaning are computed in parallel and constrain each other.

Stage 5: Semantic Integration

Finally, your brain constructs meaning by integrating word-level semantics with syntactic structure, contextual information, and world knowledge. This highest level of processing involves widespread brain networks including the anterior temporal lobes, which store conceptual knowledge, and prefrontal regions that maintain working memory and integrate information over time.

The Surprising Role of the Cerebellum in Language

One of the most surprising discoveries in recent neuroscience research is the cerebellum's critical involvement in language processing. Traditionally considered primarily responsible for motor coordination, the cerebellum—that "little brain" at the back of your skull—turns out to play a crucial role in the timing, rhythm, and flow of language.

A January 2026 study published in the journal Neuron mapped language-responsive regions of the human cerebellum with unprecedented precision. Researchers found that the cerebellum helps regulate the timing and sequencing of linguistic operations, much as it does for smooth physical movements. When cerebellar function is impaired, people often struggle with word-finding, constructing complex sentences, and maintaining the natural rhythm of speech.

This discovery reinforces the idea that language processing shares fundamental computational principles with sensorimotor control. Both require precise timing, predictive processing, error correction, and rapid sequencing of complex operations—exactly the specialties of cerebellar circuits.

Context Is Everything: How Your Brain Predicts Language

Your brain doesn't passively wait for each word to arrive and then process it. Instead, it actively predicts what's coming next based on context. This predictive processing dramatically speeds up comprehension and explains why you can often complete someone's sentence before they finish speaking.

Research shows that as you listen to speech or read text, your brain continuously generates expectations about upcoming words based on syntactic constraints, semantic plausibility, and real-world knowledge. When predictions are confirmed, processing is efficient and effortless. When they're violated—as in surprising or ambiguous sentences—additional neural resources are recruited to revise your interpretation.
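Predictability of this kind is often quantified as surprisal, the negative log probability of a word given its context: S(w) = -log2 P(w | context). Expected words have low surprisal, and violations have high surprisal. Below is a minimal sketch built on a tiny made-up corpus and a bigram model, so only the previous word counts as context, with add-one smoothing. The corpus and function names are hypothetical. Research studies typically estimate these probabilities with much larger language models.

```python
import math
from collections import Counter

# Hypothetical toy corpus; real studies use large corpora or neural language models.
CORPUS = "the cat sat on the mat . the dog sat on the rug . the cat ate the fish .".split()

VOCAB = set(CORPUS)
# Count each word only where it has a successor, so it can serve as bigram context.
HISTORY = Counter(CORPUS[:-1])
BIGRAMS = Counter(zip(CORPUS, CORPUS[1:]))


def bigram_prob(prev: str, word: str) -> float:
    """P(word | prev) with add-one (Laplace) smoothing over the toy vocabulary."""
    return (BIGRAMS[(prev, word)] + 1) / (HISTORY[prev] + len(VOCAB))


def surprisal(prev: str, word: str) -> float:
    """Surprisal in bits: -log2 P(word | prev). Higher means less expected."""
    return -math.log2(bigram_prob(prev, word))


if __name__ == "__main__":
    # "cat" follows "the" twice in the toy corpus; "sat" never does.
    print(f"{surprisal('the', 'cat'):.2f}")  # 2.42
    print(f"{surprisal('the', 'sat'):.2f}")  # 4.00
```

The smoothing keeps unseen continuations such as "the sat" from getting zero probability (infinite surprisal), loosely analogous to a violated but still interpretable prediction.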

This predictive nature of language processing helps explain why skilled readers can read silently much faster than they could speak the same text aloud. Because their brains anticipate likely word sequences, readers often skip short, highly predictable words entirely and spend less time on words that context has already made likely, instead of laboriously processing every word at the same pace.

The Interconnection Between Language and Sensory Experience

Language isn't processed in isolation from other cognitive systems. Research from 2025 suggests that language fundamentally shapes how sensory experiences are organized and retrieved in memory. When you hear or read the word "banana," your brain does more than activate an abstract linguistic representation. It can also engage visual areas that represent the color yellow and the curved shape of bananas.

This deep integration between language and perception occurs through neural pathways connecting language regions in the temporal cortex with sensory association areas. Studies of stroke patients with damage to these connecting pathways show impaired ability to recall object properties like typical colors, despite having intact visual processing. This suggests language serves as an organizational framework for storing and accessing perceptual knowledge.

The finding has profound implications: language isn't merely a communication tool but a fundamental cognitive scaffold that structures how we mentally represent and navigate our world.

Bilingualism: A Window Into Language Processing

Studying bilingual and multilingual individuals provides unique insights into how the brain handles language. Remarkably, bilingual brains don't maintain completely separate systems for each language. Instead, both languages are active simultaneously, even when only one is being used at the moment.

Brain imaging studies show that bilingual individuals develop stronger connections between language control regions and core language networks. These enhanced connections allow bilinguals to manage cross-language interference and switch flexibly between languages. The experience of learning and using multiple languages literally restructures brain connectivity patterns, creating more efficient pathways for linguistic processing.

This neural plasticity continues throughout life. When adults learn a second language, their brains show measurable changes in white matter connectivity within months of intensive practice. The changes are most pronounced in pathways connecting frontal control regions with temporal language areas, reflecting the increased cognitive demands of managing multiple linguistic systems.

From Neurons to Conversations: The Complete Picture

Understanding language requires appreciating multiple levels of organization, from individual neurons firing in specific patterns to large-scale networks coordinating across the entire brain. Recent advances in recording technology have allowed researchers to track this process with unprecedented detail.

At the neuronal level, scientists have identified specialized cell populations that respond selectively to specific linguistic features—some neurons fire preferentially for nouns versus verbs, others for animate versus inanimate objects, still others for syntactic boundaries between phrases. These populations are organized hierarchically, with earlier processing stages responding to simpler features and later stages integrating information across multiple dimensions.

At the network level, different brain regions communicate through synchronized oscillations in their electrical activity. When you understand a sentence, populations of neurons in frontal, temporal, and parietal regions synchronize their firing patterns, allowing information to flow efficiently between regions. Disruption of these synchronized patterns—as occurs in certain neurological conditions—can severely impair language function despite intact individual brain regions.
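Synchrony between two regions is often quantified with the phase-locking value (PLV). The PLV is the magnitude of the average of exp(i(phase_a - phase_b)) over time. It is 1 when two signals keep a constant phase difference and near 0 when their phase relationship is random. The minimal sketch below assumes the instantaneous phases have already been extracted. In practice this is typically done with band-pass filtering and a Hilbert transform, which are omitted here. The function name and example signals are hypothetical.

```python
import cmath
import math
from typing import Sequence


def phase_locking_value(phases_a: Sequence[float], phases_b: Sequence[float]) -> float:
    """PLV = |mean(exp(i * (phase_a - phase_b)))|, a value between 0 and 1.

    Assumes both inputs are instantaneous phases in radians, sampled at the same times.
    """
    if len(phases_a) != len(phases_b) or len(phases_a) == 0:
        raise ValueError("phase sequences must be non-empty and equal in length")
    total = sum(cmath.exp(1j * (a - b)) for a, b in zip(phases_a, phases_b))
    return abs(total) / len(phases_a)


if __name__ == "__main__":
    n = 100
    # Two hypothetical 10 Hz oscillations sampled at 1 kHz with a constant phase lag.
    region_a = [2 * math.pi * 10 * k / 1000 for k in range(n)]
    region_b = [p + 0.5 for p in region_a]
    print(round(phase_locking_value(region_a, region_b), 3))  # 1.0
    # A phase difference that cycles uniformly around the circle gives a PLV close to 0.
    drifting = [2 * math.pi * k / n for k in range(n)]
    print(round(phase_locking_value([0.0] * n, drifting), 3))
```

Because the PLV ignores amplitude and depends only on the consistency of the phase difference, it captures the "timing alignment" sense of synchrony described above rather than overall activity levels.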

Practical Applications: Technology That Understands Language

Understanding how the brain processes language has practical implications far beyond neuroscience laboratories. This knowledge informs the development of more effective language learning methods, better treatments for language disorders, and increasingly sophisticated language technologies.

For professionals working with language—translators, linguists, language teachers—this research emphasizes the importance of context, prediction, and multimodal integration in comprehension. Effective language teaching should engage multiple sensory systems, provide rich contextual support, and encourage predictive processing rather than rote memorization of isolated rules.

Practical tools can also reduce friction for language professionals. For instance, the SDL Studio Converter from Linigu lets translators work with linguistic content in familiar, accessible formats. It offers free conversion of SDL Trados files into Word or Excel formats with instant registration. This removes a technical barrier, so language professionals can focus on the cognitive work of understanding and rendering meaning across languages. The idea is consistent with the processing-efficiency view described earlier: when information is easier to access and manipulate, more cognitive resources may remain for higher-level linguistic analysis.

When Language Processing Goes Wrong

Studying language disorders provides crucial evidence about normal processing. Aphasia—language impairment following brain damage—reveals the specialized contributions of different brain regions. Broca's aphasia, resulting from frontal lobe damage, primarily affects speech production and grammatical construction while leaving comprehension relatively intact. Wernicke's aphasia, from temporal lobe damage, produces fluent but often meaningless speech with severe comprehension deficits.

These dissociations demonstrate that language comprehension and production rely on partially separable neural systems, though they're normally tightly integrated. Understanding these systems has led to more targeted rehabilitation strategies that work with the brain's natural plasticity to restore function after injury.

Developmental language disorders—difficulties acquiring language in childhood—highlight the importance of specific neural circuits and developmental windows. Early intervention is crucial because the young brain's remarkable plasticity allows alternative pathways to compensate for impaired networks in ways that become increasingly difficult as the brain matures.

The Evolution of Language Understanding

The neural architecture supporting human language didn't emerge from nothing. Comparative studies with other primates reveal that humans share basic auditory processing systems with monkeys and apes, but our species developed specialized expansions and modifications that enable uniquely human linguistic abilities.

Key differences include enhanced connectivity between temporal and frontal regions, expanded working memory capacity, and more sophisticated motor control for speech articulation. Perhaps most importantly, humans developed the ability to recursively combine linguistic elements—embedding phrases within phrases to create infinite expressive possibilities from finite building blocks.
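Here is a toy illustration of recursion. It uses a single hypothetical rule in which a clause can contain another clause ("X thinks that" + clause). Three names and a handful of words are enough to generate sentences of any depth. The names and the function `clause` are illustrative only. The sketch shows the formal idea of embedding, not how the brain implements it.

```python
# Hypothetical finite vocabulary of speakers.
SPEAKERS = ["Ann", "Ben", "Cleo"]


def clause(depth: int) -> str:
    """Build a clause with `depth` levels of embedding.

    Recursive case: clause -> SPEAKER "thinks that" clause
    Base case:      clause -> "it is raining"
    """
    if depth == 0:
        return "it is raining"
    speaker = SPEAKERS[depth % len(SPEAKERS)]
    return f"{speaker} thinks that {clause(depth - 1)}"


if __name__ == "__main__":
    for d in range(3):
        print(clause(d))
```

The three printed lines are "it is raining", "Ben thinks that it is raining", and "Cleo thinks that Ben thinks that it is raining". The same finite rule set can keep going to any depth.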

This evolutionary perspective suggests that language processing leverages and extends pre-existing sensorimotor circuits rather than representing an entirely novel cognitive system. The brain repurposed existing machinery for perception, action planning, and sequential processing to support the new communicative demands of language.

The Future of Language Neuroscience

We stand at an exciting moment in understanding how brains process language. Advanced neuroimaging techniques, massive datasets from naturalistic language use, and sophisticated computational models are converging to provide unprecedented insight into the neural code underlying human communication.

Emerging technologies promise even deeper understanding. Brain-computer interfaces that decode intended speech from neural activity could help people who have lost the ability to speak. Real-time brain imaging during natural conversation reveals the dynamic coordination required for turn-taking, topic tracking, and social inference. Machine learning models trained on both language data and brain recordings are beginning to predict neural responses to novel sentences with surprising accuracy.

These advances will continue transforming how we treat language disorders, teach second languages, and build technology that understands human communication. Most fundamentally, they're revealing the remarkable biological implementation of our most distinctively human cognitive achievement.

Conclusion: The Marvel of Effortless Understanding

Every conversation you have, every book you read, every text message you send represents an extraordinary accomplishment of neural computation. Your brain seamlessly coordinates dozens of regions, processes information at multiple hierarchical levels, predicts upcoming input, integrates linguistic content with world knowledge, and makes it all feel utterly natural.

Recent research has dramatically expanded our understanding of this process, revealing that language processing involves whole-brain coordination rather than isolated specialized modules, unfolds through systematic hierarchical stages similar to artificial neural networks, depends critically on predictive processing and context, deeply integrates with sensory and motor systems, and exhibits remarkable plasticity throughout life.

Perhaps most remarkably, despite this complexity, most of us master language without formal instruction, through simple exposure and social interaction. The human brain appears exquisitely tuned by evolution to extract linguistic structure from experience and build the rich communicative capacity that defines our species. Understanding how it accomplishes this feat remains one of the most fascinating challenges in contemporary neuroscience.
